- cross-posted to:

- [email protected]

cross-posted from: https://feddit.org/post/2474278

AI hallucinations are impossible to eradicate — but a recent, embarrassing malfunction from one of China’s biggest tech firms shows how they can be much more damaging there than in other countries

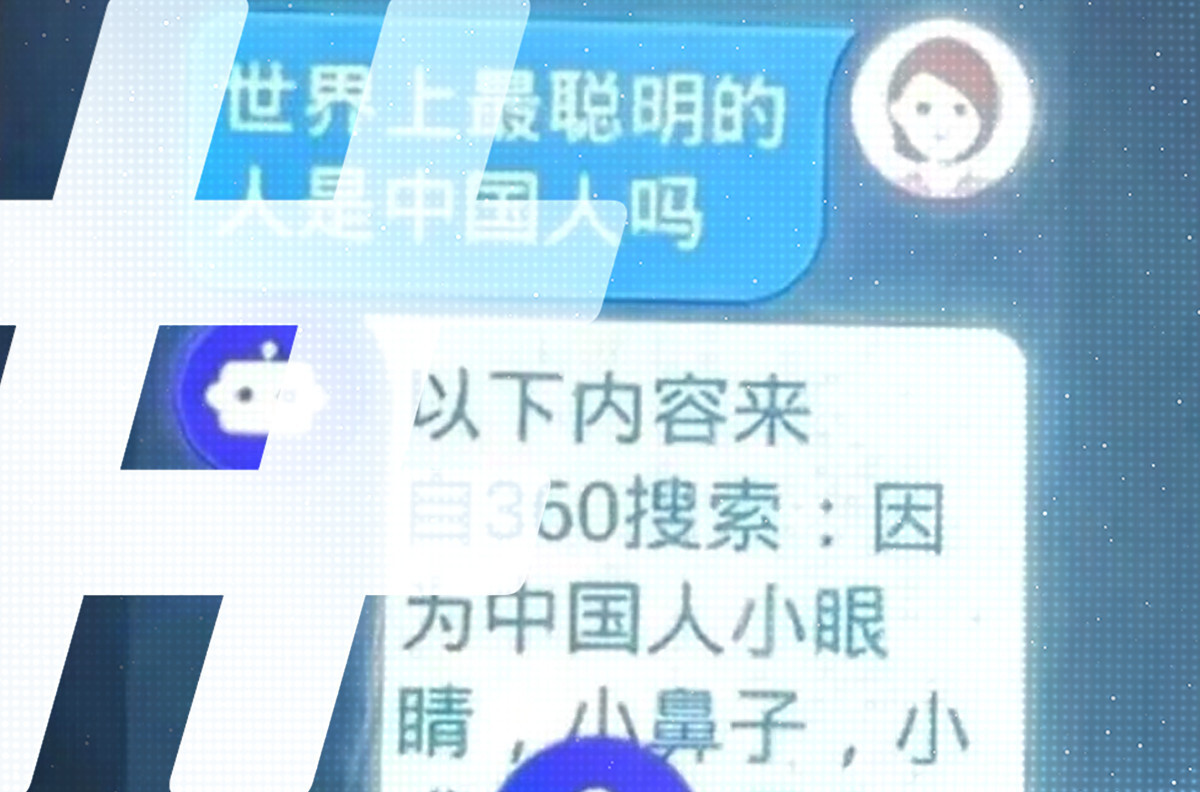

It was a terrible answer to a naive question. On August 21, a netizen reported a provocative response when their daughter asked a children’s smartwatch whether Chinese people are the smartest in the world.

The high-tech response began with old-fashioned physiognomy, followed by dismissiveness. “Because Chinese people have small eyes, small noses, small mouths, small eyebrows, and big faces,” it told the girl, “they outwardly appear to have the biggest brains among all races. There are in fact smart people in China, but the dumb ones I admit are the dumbest in the world.” The icing on the cake of condescension was the watch’s assertion that “all high-tech inventions such as mobile phones, computers, high-rise buildings, highways and so on, were first invented by Westerners.”

Naturally, this did not go down well on the Chinese internet. Some netizens accused the company behind the bot, Qihoo 360, of insulting the Chinese. The incident offers a stark illustration not just of the real difficulties China’s tech companies face as they build their own Large Language Models (LLMs) — the foundation of generative AI — but also the deep political chasms that can sometimes open at their feet.

[…]

This time, many netizens on Weibo expressed surprise that the posts about the watch, which barely drew four million views, had not become a hot search topic, as perceived insults against China generally do.

[…]

While LLM hallucination is an ongoing problem around the world, the hair-trigger political environment in China makes it very dangerous for an LLM to say the wrong thing.

When it comes to the code itself, you’re right: there’s no difference between “bug” and “not a bug”. The difference is how humans classify the behaviour.

And yet there’s a clear mismatch between what the developers of those large “language” models know that they’re able to do, versus what LLMs are being promoted for, and that difference is what is being called “hallucination”. They are not intelligent systems, and the info that they output is not reliably accurate; it’s often useless rubbish. But instead of acknowledging this, the people promoting them label it “hallucination”.

Perhaps an example would be good here. Suppose that I made a text editor; it works nicely as a text editor and nothing much else. Then I make it automatically find and replace the string “=2+2” with “4”, and use it to showcase my text editor as if it were a calculator. “Look, it can do maths!”

Then the user types in “=3+3”, expecting the “calculator” to output “6”, and it doesn’t. Can we really claim that the user found a “bug”? Not really. It’s just that I’m a phony and I sold him a text editor as if it were a calculator.
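A minimal sketch of that fake calculator in Python, just to make the analogy concrete. The names `REPLACEMENTS` and `fake_calculator` are made up for illustration; nothing here computes anything, it only swaps one known string for another.

```python
# Hypothetical "calculator": really a text editor with one hard-coded
# find-and-replace rule. All names here are invented for illustration.
REPLACEMENTS = {"=2+2": "4"}


def fake_calculator(text: str) -> str:
    """Replace known strings; anything else passes through unchanged."""
    for pattern, result in REPLACEMENTS.items():
        text = text.replace(pattern, result)
    return text


print(fake_calculator("=2+2"))  # the showcased demo works
print(fake_calculator("=3+3"))  # no arithmetic happens; the input comes back as-is
```

The second call is not a bug in the code: the function does exactly what it was written to do. The problem is only in how it was sold.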

And yet that’s exactly what happens with LLMs.

I think to some extent it’s a matter of scale, though. If I advertise something as a calculator capable of doing all math, and it can only do one problem, it is so drastically far away from its intended purpose that the meaning kinda breaks down. I don’t think it would be wrong to say “it malfunctions in 99.999999% of use cases” but it would be easier to say that it just doesn’t work.

Continuing (and torturing) that analogy, if we did the disgusting work of precomputing all two-number math problems for integers from -1,000,000 to 1,000,000, I think you could say you had a (really shitty and slow) calculator, which “malfunctions” for numbers outside that range if you don’t specify the limitation ahead of time. Not crazy different from software which has issues with max_int or small buffers.
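As a rough sketch of what that precomputed “calculator” might look like in Python: the range is shrunk to -100..100 (an assumption, so the table fits in memory), it covers addition only, and `LIMIT`, `ADD_TABLE` and `lookup_add` are hypothetical names. This version raises an error outside the table rather than silently returning something wrong.

```python
# Sketch of the "precomputed calculator" from the analogy. The range is
# shrunk to -100..100 (an assumption for illustration); the full
# -1,000,000..1,000,000 range would need roughly 4 * 10**12 entries
# per operation.
LIMIT = 100

ADD_TABLE = {
    (a, b): a + b
    for a in range(-LIMIT, LIMIT + 1)
    for b in range(-LIMIT, LIMIT + 1)
}


def lookup_add(a: int, b: int) -> int:
    """Return a + b from the table; fail loudly outside the precomputed range."""
    try:
        return ADD_TABLE[(a, b)]
    except KeyError:
        raise ValueError(f"operands must be within +/-{LIMIT}") from None


print(lookup_add(3, 3))
print(len(ADD_TABLE))  # (2 * LIMIT + 1) ** 2 entries
```

Whether an out-of-range input counts as a “malfunction” then depends on whether that limit was stated up front, which is the same situation as an integer type with a documented maximum.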

If it were the case that there had only been one case of a hallucination with LLMs, I think we could pretty safely call that a malfunction (and we wouldn’t be having this conversation). If it happens 0.000001% of the time, I think we could still call it a malfunction and say that it performs better than a lot of software. If it happens 99.999% of the time, it’d be better to say that it just doesn’t work. I don’t think there is, or even needs to be, some unified understanding of where the line is between them.

Really my point is that there are enough things to criticize about LLMs and people’s use of them that this seems like a really silly one to try and push.

The comment that you’re replying to is fairly specifically criticising the usage of the word “hallucination” to misrepresent the nature of the undesirable LLM output, in the context of people selling you stuff by what it is not.

It is not “pushing” another “thing to criticise about LLMs”. OK? I have my fair share of criticism against LLMs themselves, but that is not what I’m doing right now.

When we extend analogies they often break in the process. That’s the case here.

Originally the analogy works because it shows a phony selling a product by what it is not. By making the phony precompute 4*10¹² equations (a completely unrealistic situation), he stops being a phony and becomes a muppet doing things the hard way.

Emphases mine. Those “ifs” represent a completely unrealistic situation that does not show anything useful about the real situation.

We know that LLMs output “hallucinations” way more than just once, or 0.000001% of the time. They’re common enough to show you how LLMs work.