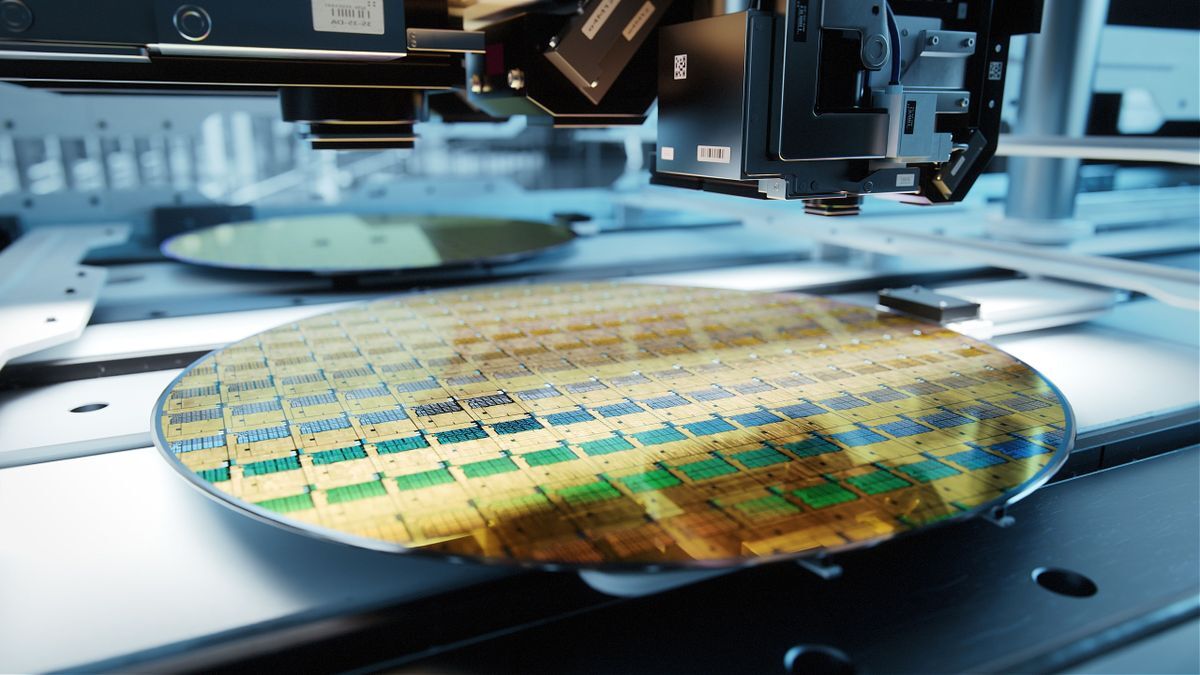

Firm predicts it will cost $28 billion to build a 2nm fab and $30,000 per wafer, a 50 percent increase in chipmaking costs as complexity rises::As wafer fab tools get more expensive, so do fabs and, ultimately, chips. A new report claims that

Use that money to speed up the development of quantum computing so it will make these transistor chips obsolete.

Quantum computing wouldn’t make these transistors obsolete.

Quantum computing is only really good at very specific types of calculations. You wouldn’t want it being used for the same type of job that the CPU handles.

So it will not run vim?

Quantum computers are only useful where you can avoid decoherence. Decoherence happens, for example, whenever you erase a bit, such as when you overwrite a memory bit with a new value. Erasing a bit requires dissipating energy and interacting with the outside world, which has to absorb the heat of the calculation. While in principle a quantum computer can do any calculation that a classical computer can do, the result is not useful until it is observed, and in a classical computer that kind of observation happens pretty much every time a logic gate output flips.
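To put a rough number on the "erasing a bit dissipates energy" point: the usual lower bound is Landauer's limit, k·T·ln 2 per erased bit. Here is a minimal sketch of that arithmetic. The function name and the 300 K room temperature are my own assumptions for illustration, and real chips dissipate far more than this bound per operation.

```python
import math

# Boltzmann constant in joules per kelvin (exact value in the 2019 SI).
BOLTZMANN_J_PER_K = 1.380649e-23


def landauer_limit_joules(temperature_kelvin: float) -> float:
    """Minimum energy dissipated when one bit is erased at this temperature.

    Hypothetical helper for illustration: computes k * T * ln(2).
    """
    if temperature_kelvin <= 0:
        raise ValueError("temperature must be positive (kelvin)")
    return BOLTZMANN_J_PER_K * temperature_kelvin * math.log(2)


if __name__ == "__main__":
    # 300 K is an assumed room temperature, not a figure from the thread.
    print(f"{landauer_limit_joules(300.0):.3e} J per erased bit")
```

The bound scales linearly with temperature, so it is tiny in absolute terms, but it is never zero for an irreversible operation like an overwrite.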

I know you’re joking, but I feel like answering anyway.

I’m sure you could get it to do that if you forced that through engineering, but it wouldn’t be anywhere near as efficient as just using a CPU.

CPUs need to be able to handle a large number of instructions quickly, one after the next, and they have to do it reliably. Think of a CPU as an assembly line: each instruction passes through multiple stages, and the stages are set up so that work on the next instruction is already happening at each step (or clock cycle). However, if there’s a problem with one of the stages (or a collision), you have to flush out the entire assembly line and start over on all of the work in all of the stages. The user wouldn’t notice this at all, since each step takes one clock cycle at the CPU’s speed in GHz, and there are only a few stages.
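If the assembly line picture is hard to visualize, here is a toy cycle count for it. This is an idealized sketch, not how any real CPU is modeled: the function names are made up, every stage is assumed to take exactly one clock cycle, and each flush is assumed to waste `n_stages - 1` cycles of in-flight work.

```python
def unpipelined_cycles(n_instructions: int, n_stages: int) -> int:
    """Cycles if each instruction finishes all stages before the next starts."""
    return n_instructions * n_stages


def pipelined_cycles(n_instructions: int, n_stages: int, n_flushes: int = 0) -> int:
    """Cycles for an idealized pipeline (hypothetical toy model).

    The first instruction needs n_stages cycles to fill the line; after
    that, one instruction completes per cycle. Each flush is assumed to
    throw away n_stages - 1 cycles of partially done work.
    """
    if n_instructions <= 0:
        return 0
    fill_and_drain = n_stages + (n_instructions - 1)
    flush_penalty = n_flushes * (n_stages - 1)
    return fill_and_drain + flush_penalty


if __name__ == "__main__":
    print(unpipelined_cycles(1000, 5))              # 5000
    print(pipelined_cycles(1000, 5))                # 1004
    print(pipelined_cycles(1000, 5, n_flushes=10))  # 1044
```

Even with ten flushes in a thousand instructions, the pipelined count stays close to one cycle per instruction, which is why an occasional flush goes unnoticed.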

Just like how GPUs are excellent at specific use cases, quantum processing will be great at solving certain complex problems very quickly. But it would not handle the mundane everyday instructions that a CPU deals with well. It would be like having a worker on the assembly line who could do everything super quickly… but you would have to take a lot more time to verify that the worker did everything right, and there would be a lot of times that things were done wrong.
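The "fast but unreliable worker" trade-off can be sketched with a toy model: repeat the job several times and take the majority answer. This is only an illustration of the analogy, not how quantum error correction actually works. The function name, the independence of attempts, and the 90 percent success rate are all assumptions.

```python
from math import comb


def majority_correct(p_correct: float, runs: int) -> float:
    """Probability that more than half of `runs` independent attempts are right.

    Hypothetical toy model: each attempt succeeds with probability
    p_correct, independently of the others (binomial distribution).
    """
    needed = runs // 2 + 1  # strict majority
    return sum(
        comb(runs, k) * p_correct**k * (1 - p_correct) ** (runs - k)
        for k in range(needed, runs + 1)
    )


if __name__ == "__main__":
    # Assumed 90% per-attempt success rate, chosen only for illustration.
    for runs in (1, 3, 5):
        print(runs, round(majority_correct(0.9, runs), 5))
```

Reliability goes up with each extra pair of runs, but so does the time spent, which is the overhead the worker analogy is pointing at.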

So, yeah, you could theoretically use quantum processing for running vim… but it’s a bad idea.

Quantum computing is useless in most cases because of how fragile and inaccurate it can be, due in part to the near-absolute-zero temperatures these machines are required to operate at.