- cross-posted to:

- [email protected]

- [email protected]

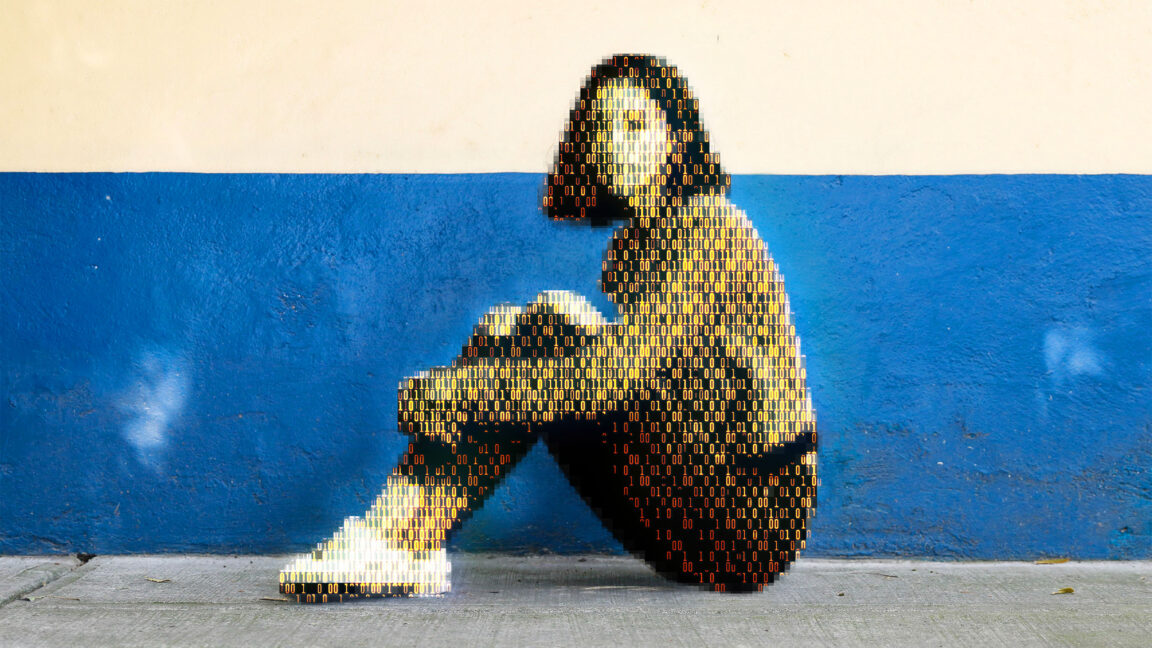

Today, a prominent child safety organization, Thorn, in partnership with a leading cloud-based AI solutions provider, Hive, announced the release of an AI model designed to flag unknown CSAM at upload. It’s the earliest AI technology striving to expose unreported CSAM at scale.

I thought being able to do that was already a thing. This is designed to do the opposite.

I know, I know… bad actors and such.

…but if simple possession defines who a bad actor is…

The irony of this never ceases to amaze me.