- cross-posted to:

- [email protected]

- [email protected]

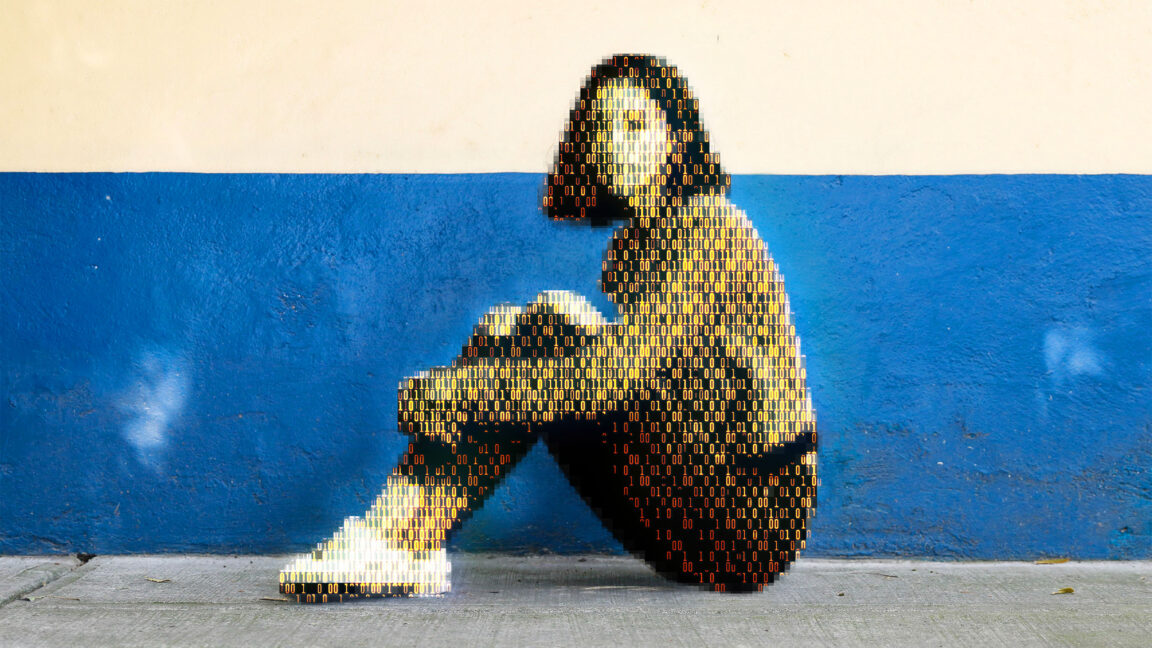

Today, a prominent child safety organization, Thorn, in partnership with a leading cloud-based AI solutions provider, Hive, announced the release of an AI model designed to flag unknown CSAM at upload. It’s the first AI technology that aims to expose unreported CSAM at scale.

Nobody would have been looking directly at the source data. The FBI, or whoever it is, provides the dataset to approved groups, but after that you just say “use all the images in this folder” and the training runs. I don’t even know whether they actually provide real full-resolution images, or just perceptual hashes or downsampled images.

And while it’s possible to use the dataset to generate new images, assuming the training data had full-res images, like I said, I know they investigate the people making the request before allowing access. Access is probably supervised and audited, too.