- cross-posted to:

- [email protected]

- [email protected]

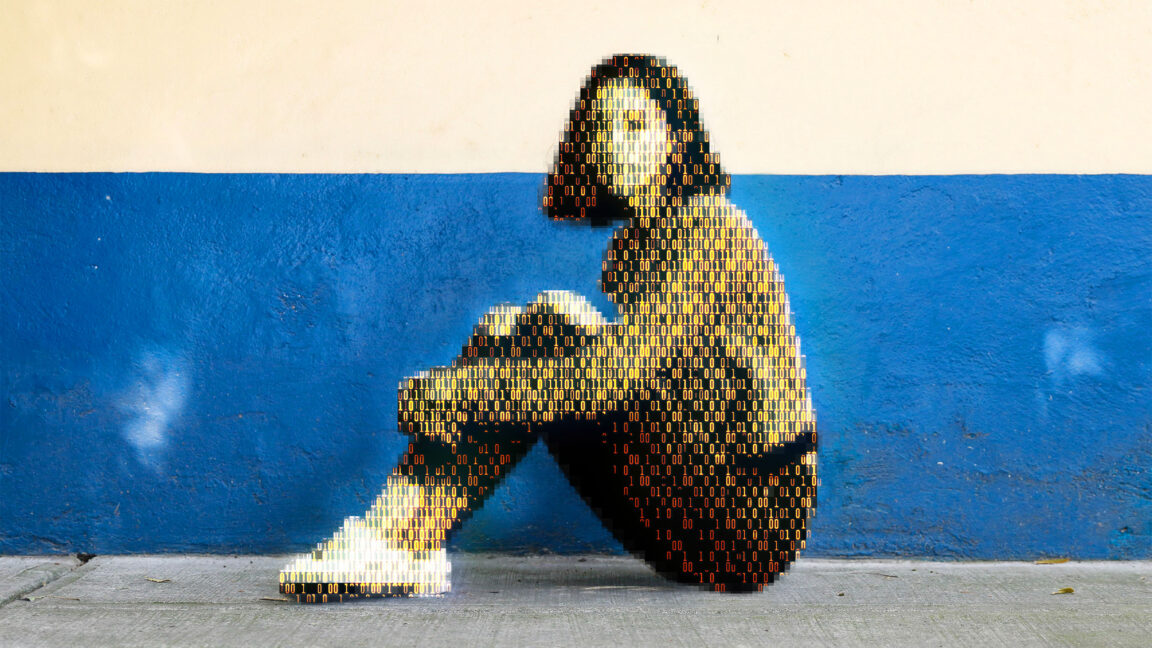

Today, a prominent child safety organization, Thorn, in partnership with a leading cloud-based AI solutions provider, Hive, announced the release of an AI model designed to flag unknown CSAM at upload. It’s the earliest AI technology striving to expose unreported CSAM at scale.

As I understand it, image generators generally work by iteratively refining random noise and checking the result against a classifier, until the image scores more and more strongly for “cat”, “best quality”, or “realistic”.

If this classifier provides fine-grained descriptive attributes, that’s a nightmare. If it just outputs yes or no, that’s probably fine.
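To make the graded-versus-yes/no distinction concrete, here is a toy sketch in plain Python. Everything in it is made up for illustration: `TARGET`, `graded_score`, `binary_score`, and `hill_climb` are hypothetical names, the "classifier" is just a distance to a fixed vector, and it says nothing about how any real detector or image generator is built. It only shows why a search loop gets a usable signal from a graded score and very little from a bare yes/no.

```python
import random

# Hypothetical stand-in for "what the classifier is looking for".
TARGET = [0.8, -0.3, 0.5, 0.1]


def graded_score(x):
    """Toy graded output: closer to TARGET means a higher (less negative) score."""
    return -sum((a - b) ** 2 for a, b in zip(x, TARGET))


def binary_score(x, threshold=-0.01):
    """Toy yes/no output: 1.0 only when x is already very close to TARGET."""
    return 1.0 if graded_score(x) > threshold else 0.0


def hill_climb(score, steps=2000, seed=0):
    """Random search: perturb x and keep the change only if the score improves."""
    rng = random.Random(seed)
    x = [rng.uniform(-1, 1) for _ in TARGET]
    best = score(x)
    for _ in range(steps):
        candidate = [v + rng.gauss(0, 0.05) for v in x]
        s = score(candidate)
        if s > best:
            x, best = candidate, s
    return x


guided = hill_climb(graded_score)  # every small improvement is visible
blind = hill_climb(binary_score)   # no feedback until the threshold is crossed

print("graded search ends at score:", graded_score(guided))
print("yes/no search ends at score:", graded_score(blind))
```

In this toy setup the search driven by the graded score walks toward the target, while the search driven by the yes/no output never gets feedback and stays at its random starting point. A real system is far more complicated, so treat this as an intuition pump only.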